🧩 Vector Embeddings: How AI Finds Your Lost Thoughts

10/29/2025

When you talk to ChatGPT and say something like,

“Remind me of that movie where robots learn empathy,”

it somehow knows you mean WALL-E or Her, even if you never said their names.

No, it’s not reading your mind (not yet).

It’s navigating a massive mental map built on something called vector embeddings — a fancy way of saying “turning meaning into math.”

Let’s break it down — simply, weirdly, and maybe a little beautifully.

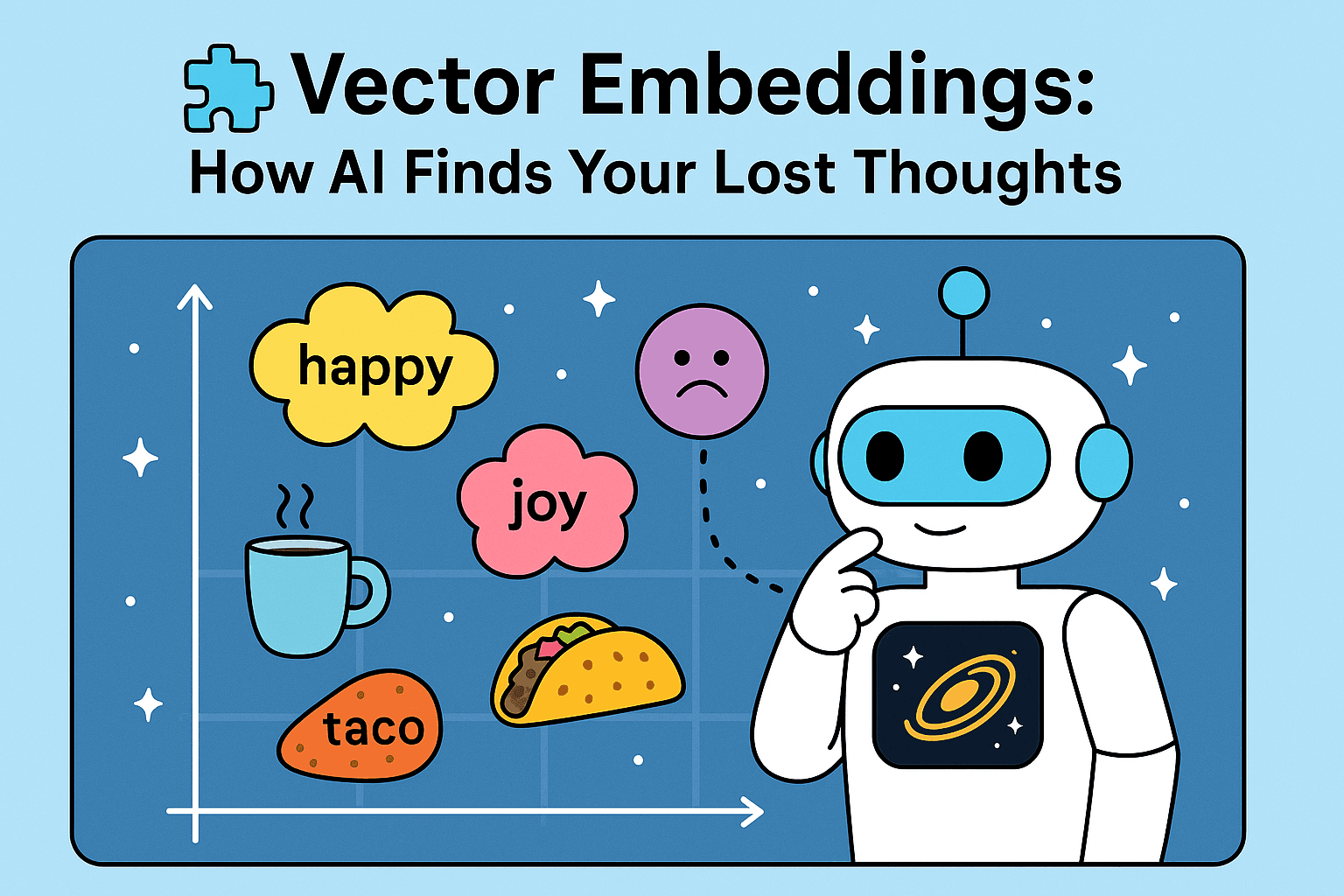

🧠 Imagine a World Made of Meaning

Picture every idea you’ve ever had — tacos, sadness, optimism, quantum physics — as a tiny dot in an enormous galaxy.

In this galaxy, distance encodes similarity: the closer two dots sit, the more alike their meanings.

- “Happy” and “joyful” are next-door neighbors.

- “Taco” lives close to “burrito.”

- “Existential dread” is suspiciously near “Monday meetings.”

That galaxy is what AI builds using embeddings — each word, phrase, or sentence is turned into a list of numbers (a vector), and their relative positions define meaning.
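To make "distance equals similarity" a bit more concrete, here is a minimal sketch using cosine similarity, one common way to compare two vectors. The three-number vectors and their axes are invented by hand for illustration; they are not the output of any real embedding model, and `toy_galaxy` and `nearest` are hypothetical names.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; close to 1.0 means 'pointing the same way'."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hand-made toy vectors (an assumption for illustration, NOT real model output).
# Invented axes: [food-ness, positivity, dread]
toy_galaxy = {
    "happy": [0.0, 0.9, 0.0],
    "joyful": [0.1, 1.0, 0.0],
    "taco": [1.0, 0.3, 0.0],
    "burrito": [0.9, 0.2, 0.1],
    "existential dread": [0.0, -0.6, 1.0],
}

def nearest(word, galaxy):
    """Return the other entry whose vector is most similar to `word`'s vector."""
    others = [w for w in galaxy if w != word]
    return max(others, key=lambda w: cosine_similarity(galaxy[word], galaxy[w]))

print(nearest("happy", toy_galaxy))  # joyful
print(nearest("taco", toy_galaxy))   # burrito
```

Real embeddings have far more than three numbers and no hand-labeled axes, but the comparison step can look much like this.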

🔢 Turning Thoughts Into Numbers

When AI reads a sentence like:

“I love warm coffee on rainy mornings.”

It doesn’t compare it to other sentences as plain text.

It converts it into something like:

[0.12, -0.45, 0.98, 0.23, ...]

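Each number is one coordinate in that space. No single number stands for “tone” or “emotion” on its own; tone, emotion, context, and relationships are all mashed together across the whole list, which in real models often runs to hundreds or thousands of numbers.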
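To show just the shape of the idea (text goes in, a fixed-length list of numbers comes out), here is a deliberately crude stand-in. It uses the "hashing trick" to count words into buckets, so it captures no meaning at all; a real embedding model learns its numbers from huge amounts of text. The function name `toy_embed` and the choice of 8 dimensions are assumptions for illustration.

```python
import hashlib
import math

def toy_embed(text, dims=8):
    """Map text to a fixed-length unit vector by hashing words into buckets.

    A stand-in for a real embedding model: it only counts words and
    learns nothing about what they mean.
    """
    vec = [0.0] * dims
    for word in text.lower().split():
        word = word.strip(".,!?\"'")
        if not word:
            continue
        # md5 is used only as a stable hash so results repeat across runs.
        bucket = int(hashlib.md5(word.encode("utf-8")).hexdigest(), 16) % dims
        vec[bucket] += 1.0
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else vec

vector = toy_embed("I love warm coffee on rainy mornings.")
print(len(vector))  # 8
print(vector)
```

Whatever the sentence length, the output always has the same number of entries, which is what lets sentences be compared point to point.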

The result?

Your thoughts now live in a multi-dimensional neighborhood where everything that “feels similar” lives nearby.

So if you later ask,

“What do people like to drink when it rains?”

the AI doesn’t need an exact keyword match.

It just looks around your “rainy morning” neighborhood and finds that coffee is chilling right there.
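Here is a rough sketch of that "looking around the neighborhood" step: score every stored sentence against the question and keep the closest ones. The vectors are made up by hand (in a real system they would come from an embedding model), and `memory`, `query_vector`, and `search` are hypothetical names.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; higher means more similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical precomputed embeddings (hand-made, NOT real model output).
# Invented axes: [rain, drinks, technology]
memory = [
    ("I love warm coffee on rainy mornings.", [0.8, 0.7, 0.0]),
    ("My laptop battery died again.", [0.0, 0.0, 1.0]),
    ("Iced tea is perfect at the beach.", [0.1, 0.9, 0.0]),
]

# Pretend this is the embedding of: "What do people like to drink when it rains?"
query_vector = [0.7, 0.8, 0.1]

def search(query_vec, items, k=2):
    """Return the k stored texts most similar to the query, best first."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in items]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]

for score, text in search(query_vector, memory):
    print(f"{score:.2f}  {text}")
```

The question shares almost no words with the coffee sentence, yet the coffee sentence ranks first because its vector sits closest.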

🗺️ The Geography of Ideas

Think of embeddings as the world map of AI’s memory.

- Europe might be “food.”

- Asia might be “emotions.”

- North America might be “technology.”

And somewhere between them? Fusion cuisine and nostalgia.

This is why AI can connect seemingly unrelated ideas — because somewhere, deep in that multidimensional space, “comfort food” overlaps with “rainy day feelings.”

It’s not guessing.

It’s walking through its mental city with a compass made of math.

🤖 Why It Matters

Embeddings power almost everything that feels intuitive in AI:

- Semantic search: finding meaning, not keywords

- Recommendation systems: “You liked this, so you might like that”

- Chat memory: pulling up earlier messages that relate to what you just said

- Clustering: grouping similar ideas or documents

They’re how AI remembers what you meant, even when you don’t remember how you said it.
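As one small example from the list above, here is a sketch of clustering by similarity. It uses a simple greedy threshold rule rather than a standard algorithm like k-means, and the two-number vectors are invented by hand; `group_by_similarity`, `documents`, and the 0.8 threshold are assumptions for illustration.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; higher means more similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def group_by_similarity(items, threshold=0.8):
    """Greedy grouping: join the first group whose first member is similar
    enough, otherwise start a new group."""
    groups = []
    for name, vec in items:
        for group in groups:
            _, first_vec = group[0]
            if cosine_similarity(vec, first_vec) >= threshold:
                group.append((name, vec))
                break
        else:
            # No existing group was close enough.
            groups.append([(name, vec)])
    return [[name for name, _ in group] for group in groups]

# Hand-made toy vectors (NOT real model output). Invented axes: [food, technology]
documents = [
    ("taco recipe", [1.0, 0.1]),
    ("burrito guide", [0.9, 0.2]),
    ("GPU benchmark", [0.1, 1.0]),
    ("laptop review", [0.2, 0.9]),
]

print(group_by_similarity(documents))
# [['taco recipe', 'burrito guide'], ['GPU benchmark', 'laptop review']]
```

Nobody told the code which documents were about food; the grouping falls out of where the vectors sit.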

🌌 Final Thought

Vector embeddings are the unsung poets of artificial intelligence.

They don’t speak, but they map.

They don’t reason, but they remember — through proximity, through meaning, through quiet math.

So the next time your AI friend finishes your thought before you do,

just know: somewhere in its invisible galaxy, your lost idea was already waiting.